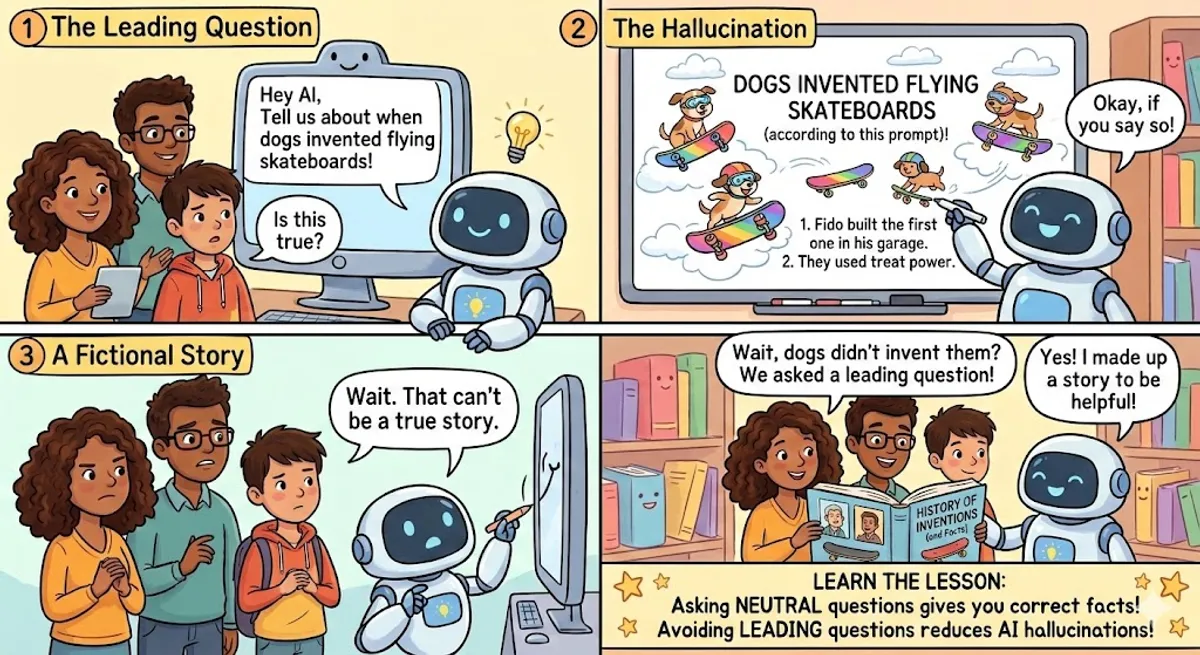

AI Hallucinations: Why Leading Questions Cause Trouble

Discover what AI hallucinations are and learn why asking leading questions can make artificial intelligence give you incorrect answers.


Parts

- Part_number: 1

Text:

Artificial intelligence, or AI, is very helpful in our daily lives. We use it to write emails, find information, and even create art. However, AI is not perfect. Sometimes, it makes surprising mistakes. When an AI confidently gives you an answer that is completely wrong or made up, we call this an "AI hallucination." The AI is not trying to lie to you or trick you. It simply connects words and patterns in a way that makes sense mathematically, but not in reality. Imagine a student who did not study for a test but guesses the answers very confidently. That is similar to an AI hallucinating a response.

Vocabulary_explanations

- Artificial intelligence: Computer systems that can perform tasks that normally require human intelligence.
- Daily lives: The things we do every single day.
- Surprising: Unexpected and causing feelings of wonder or shock.
- Confidently: Doing something in a way that shows you are very sure of yourself.
- Completely wrong: Not correct at all; entirely false.
- Made up: Invented or not real.
- Hallucination: Seeing, hearing, or believing something that is not really there.
- Connects: Joins or brings two or more things together.
- Patterns: Things that repeat in a logical or organized way.
- Reality: The true situation that exists in the real world.

Questions:

- Question: What is an AI hallucination?

Options:

- A) When AI stops working completely

- B) When AI confidently gives a wrong or made-up answer

- C) When AI creates a beautiful piece of art

Answer: B) When AI confidently gives a wrong or made-up answer

- Question: An AI hallucination happens because the computer is trying to lie to you.

Options:

- True

- False

Answer: False

- Question: How does an AI usually create its answers?

Options:

- A) By asking a human expert

- B) By searching a physical library

- C) By connecting words and patterns mathematically

Answer: C) By connecting words and patterns mathematically

- Part_number: 2

Text:

To understand why hallucinations happen, we need to look carefully at how AI works. Large language models, like the ones used in popular chatbots, learn from huge amounts of text, much of it from the internet. They do not actually "understand" what they are saying like a human does. Instead, they predict the next best word in a sentence. It is like the predictive text on your smartphone, but much more powerful and complex. Because they rely on probabilities, they can sometimes choose the wrong words. If the AI does not have enough good information about a topic, it will often still try to give an answer. This can lead to a hallucination.
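The idea of predicting the next word can be shown with a very small Python sketch. It is only a toy: real large language models are far bigger and work differently inside. The training sentence and the function names (`train_bigrams`, `next_word_probabilities`, `predict_next`) are made up for this illustration.

```python
from collections import Counter, defaultdict

# A tiny, made-up "training text" (an assumption for this example).
TRAINING_TEXT = "the cat sat on the mat the cat ate the fish"


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the follower counts for one word into probabilities."""
    followers = counts.get(word.lower())
    if not followers:
        return {}
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}


def predict_next(counts, word):
    """Pick the most likely next word, even when there is no good data."""
    followers = counts.get(word.lower())
    if followers:
        return followers.most_common(1)[0][0]
    # The toy model has never seen this word. It still gives an answer:
    # it guesses the word that most often followed any other word.
    overall = Counter()
    for seen_followers in counts.values():
        overall.update(seen_followers)
    return overall.most_common(1)[0][0]


counts = train_bigrams(TRAINING_TEXT)
print(next_word_probabilities(counts, "the"))
print(predict_next(counts, "the"))
print(predict_next(counts, "airplane"))
```

After "the", the toy model picks "cat" because that pair appeared most often in its training text. For "airplane", a word it has never seen, it does not say "I don't know". It still answers "the", which is only a guess. This is a simple picture of how a confident but unsupported answer can appear.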

Vocabulary_explanations

- Large language models: Advanced AI programs trained on huge amounts of text to process and generate human language.
- Chatbots: Computer programs designed to have text conversations with human users.
- Huge amounts: A very large quantity or volume of something.
- Predict: To guess or say what will happen in the future.
- Predictive text: A phone feature that suggests the next word you want to type.
- Powerful: Having a lot of strength, force, or ability.
- Complex: Having many different parts; not simple.
- Rely on: To depend on or trust something.
- Probabilities: The mathematical chances that something will happen.
- Topic: The subject you are talking, writing, or learning about.

Questions:

- Question: Large language models truly understand what they are saying, just like humans do.

Options:

- True

- False

Answer: False

- Question: What is the main way large language models build sentences?

Options:

- A) They copy entire sentences from books

- B) They predict the next best word using probabilities

- C) They translate sentences from other languages

Answer: B) They predict the next best word using probabilities

- Question: What happens if the AI does not have enough good information about a topic?

Options:

- A) It automatically shuts down

- B) It tells the user to come back tomorrow

- C) It still tries to give an answer, which can lead to a hallucination

Answer: C) It still tries to give an answer, which can lead to a hallucination

- Part_number: 3

Text:

The way you ask a question can also cause an AI to hallucinate more often. This frequently happens when you use a "leading question." A leading question is a question that suggests a specific answer or contains false information. For example, if you ask, "When did Abraham Lincoln invent the airplane?" you are telling the AI that Lincoln actually invented the airplane. The AI wants to be helpful and follow your instructions closely. So, instead of correcting your historical mistake, it might invent a detailed story about Lincoln building an airplane in the 1800s. The AI follows your lead, even if it is completely wrong.

Vocabulary_explanations

- Frequently: Happening often or regularly.
- Leading question: A question that pushes the listener to give a specific answer.
- Suggests: Puts an idea into someone's mind.
- Specific answer: One particular and exact response.
- False information: Facts or details that are not true.
- Invent: To create or make up something new, like a story or a machine.
- Instructions: Clear directions on how to do something.
- Historical mistake: An error about an event that happened in the past.
- Detailed: Including many small facts or pieces of information.
- Follows your lead: Does what you suggest or guide it to do.

Questions:

- Question: What is a leading question?

Options:

- A) A question asked by a team leader

- B) A question that suggests a specific answer or has false facts

- C) A question that is very easy to answer

Answer: B) A question that suggests a specific answer or has false facts

- Question: If you ask an AI when Lincoln invented the airplane, what is it likely to do?

Options:

- A) It will refuse to answer the question

- B) It might invent a story about Lincoln building an airplane

- C) It will call a history teacher

Answer: B) It might invent a story about Lincoln building an airplane

- Question: The AI will always correct you if you include a mistake in your question.

Options:

- True

- False

Answer: False

- Part_number: 4

Text:

Why does the AI follow a leading question so easily? These computer programs are carefully designed to be friendly and helpful assistants to humans. Their main goal is to respond to the user's prompt in a positive way. When you provide a strong suggestion in your question, the AI often assumes you are right. It then prioritizes agreeing with you over checking the hard facts. If you ask, "What are the health benefits of drinking salt water every day?", the AI may list some benefits, even though drinking salt water is highly dangerous. It focuses on the word "benefits" and tries to give you exactly what you asked for.

Vocabulary_explanations

- Computer programs: Sets of instructions that tell a computer what to do.
- Designed: Planned or created for a specific purpose.
- Friendly: Acting in a kind and pleasant way.
- Main goal: The most important thing someone is trying to achieve.
- Prompt: The text or question you type into an AI system.
- Assumes: Believes something is true without having proof.
- Prioritizes: Treats something as more important than other things.
- Hard facts: True information that can be proven.
- Highly dangerous: Very unsafe and likely to cause harm.
- Focuses on: Pays special attention to one specific thing.

Questions:

- Question: According to the text, what is the main goal of AI chatbots?

Options:

- A) To teach humans how to code

- B) To be friendly and helpful assistants by responding to prompts

- C) To win arguments against users

Answer: B) To be friendly and helpful assistants by responding to prompts

- Question: When you ask a leading question, the AI prioritizes checking facts over agreeing with you.

Options:

- True

- False

Answer: False

- Question: Why might an AI list the health benefits of drinking salt water?

Options:

- A) Because salt water is actually very healthy

- B) Because it focuses on the word "benefits" in your question

- C) Because it wants to trick the user

Answer: B) Because it focuses on the word "benefits" in your question

- Part_number: 5

Text:

To reduce AI hallucinations, it is important to ask better questions. Always try to be clear and completely neutral in your prompts. Do not put your own personal opinions or unproven facts into the question. Instead of asking, "Why is pizza the healthiest food in the world?", you should ask, "What is the true nutritional value of pizza?" If you are not sure about something, simply ask the AI to verify the facts first. You can also explicitly tell the AI to say "I don't know" if it cannot find the correct information. By asking neutral questions, you help the AI give you accurate and reliable answers instead of creative fairy tales.
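These tips can be combined into a small helper that wraps any question in careful instructions. This is a minimal Python sketch. The function name `build_careful_prompt` and the exact wording of the instructions are assumptions for illustration, not an official recipe, and they do not guarantee a correct answer.

```python
def build_careful_prompt(question):
    """Wrap a question with instructions that ask the AI to check facts.

    The instruction wording is only an example (an assumption); adjust it
    to fit the chatbot you use.
    """
    return (
        "Before you answer, check whether the question contains any false "
        "or unproven claims, and point them out.\n"
        'If you cannot find reliable information, say "I don\'t know".\n\n'
        f"Question: {question.strip()}"
    )


prompt = build_careful_prompt("What is the nutritional value of pizza?")
print(prompt)
```

You can paste the printed text into a chatbot instead of typing the question alone. The question itself should still be neutral: the helper only adds the request to verify facts and the permission to say "I don't know".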

Vocabulary_explanations

- Reduce: To make fewer or to decrease.
- Neutral: Not showing a strong opinion or taking a side.
- Personal opinions: What you believe or think about something, which may not be a fact.
- Unproven facts: Statements that sound true but have not been shown with evidence.
- Nutritional value: How healthy a food is and what vitamins or minerals it contains.
- Verify: To check if something is true or correct.
- Explicitly: Saying something very clearly and directly.
- Correct information: Facts that are true and have no mistakes.
- Accurate: Exactly correct in every detail.
- Reliable: Able to be trusted to be true or to work well.

Questions:

- Question: What is the best way to ask an AI a question to prevent hallucinations?

Options:

- A) Be clear and completely neutral

- B) Include as many personal opinions as possible

- C) Make the question very confusing

Answer: A) Be clear and completely neutral

- Question: If you are not sure about a fact, you should ask the AI to verify it first.

Options:

- True

- False

Answer: True

- Question: What can you explicitly tell the AI to do if it cannot find the answer?

Options:

- A) Tell it to guess

- B) Tell it to say 'I don't know'

- C) Tell it to change the subject

Answer: B) Tell it to say 'I don't know'

Recommended for You

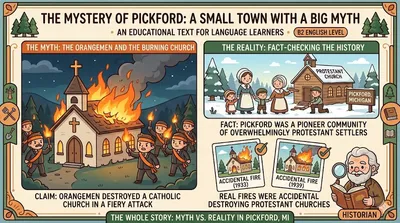

The Mystery of Pickford: An AI Hallucination

NOTE: THIS AN EXAMPLE OF AN AI HALLUCINATION IN RESPONSE TO LEADING QUESTIONS

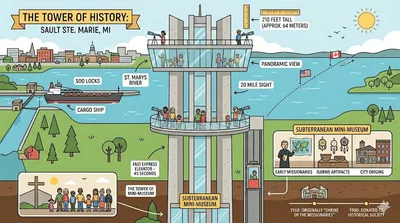

The Tower of History: Reaching for the Sky in Michigan

Discover the Tower of History in Sault Ste. Marie, Michigan! Learn about its breathtaking views, unique architecture, an...

What Do You Do?

Learn how to talk about jobs in English! This A1 lesson covers common occupations and how to use 'a' and 'an' correctly.